Multi-agent AI. Your cloud. No lock-in. No excuses.

The Agentic Command Center orchestrates your agents. Chat puts them in front of every user, Workflow Builder lets anyone author them, and Embedded Agents drop them into ServiceNow and VS Code. Hosted on your AWS, Azure, or on-prem Kubernetes, with every major LLM swap-in ready.

Customer-Hosted

Your VPC · Your Data

Multi-Agent

Auto-sharded at runtime

Every major LLM

OpenAI, Anthropic, Google, Meta, Mistral, Cohere, xAI, Nova — plus any self-hosted model

Customer-hosted

Deploys to your AWS, Azure, or on-prem Kubernetes — your account, your VPC, your data

Audit-ready

Append-only audit log, scoped API keys, signed webhooks, SAML 2.0 / OIDC / SCIM

Compliance-aligned

SOC 2 · HIPAA · PCI · ISO 27001 · FedRAMP High aligned · NIST 800-53 · IL5 in production on GovCloud · air-gapped & IL6+ ready

Built without ceilings

Any LLM. Unlimited nodes. Four products.

Open by design. Pick the model that fits, build the node you need, ship one product or all four — under one platform you own.

Any

LLM you can connect

Frontier or open-source. OpenAI, Anthropic, Bedrock, Azure, GovCloud, or self-hosted via vLLM, Ollama, llama.cpp. Per-role routing: swap models by editing a config table.

Unlimited

nodes you can build

Compose any workflow with the plugin SDK. Author custom nodes in hours, not quarters. No closed catalog, no artificial caps.

Four

products that ship together

The Agentic Command Center, Chat, Workflow Builder, and Embedded Agents for ServiceNow and VS Code. Start with one, light up the rest the moment you need them.

What makes L2H different

Built for production. Built for ownership.

Vendor-agnostic on LLMs

Connect every major frontier model — OpenAI, Anthropic Claude, Google Gemini, Meta Llama, Mistral, Cohere, Amazon Nova, xAI Grok — through OpenAI, Azure OpenAI, AWS Bedrock, Google AI, xAI, and any OpenAI-compatible endpoint (vLLM, llama.cpp, Ollama, OpenRouter, Together, Groq). Per-role routing, no lock-in.
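A minimal sketch of what per-role routing over OpenAI-compatible endpoints could look like. It is not L2H's actual configuration format: the `ROUTES` table, the role names, and the model identifiers are all hypothetical, and the local URLs are only the usual vLLM and Ollama defaults. The one real convention it relies on is the `/chat/completions` path that OpenAI-compatible servers expose.

```python
import json
from dataclasses import dataclass
from urllib.request import Request


@dataclass(frozen=True)
class Route:
    base_url: str  # root of an OpenAI-compatible API, ending in /v1
    model: str     # model identifier understood by that endpoint


# Hypothetical routing table: role names, URLs, and model IDs are placeholders.
ROUTES = {
    "planner": Route("https://api.openai.com/v1", "example-frontier-model"),
    "worker": Route("http://localhost:8000/v1", "example-open-model"),
    "finalizer": Route("http://localhost:11434/v1", "example-local-model"),
}


def build_chat_request(role: str, prompt: str, api_key: str = "") -> Request:
    """Build (but do not send) a chat-completions request for an agent role."""
    route = ROUTES[role]
    body = json.dumps({
        "model": route.model,
        # "user" here is the chat message role, not the agent role above.
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return Request(
        f"{route.base_url}/chat/completions",
        data=body,
        headers=headers,
        method="POST",
    )
```

Under these assumptions, moving a role to a different provider is a one-line change to `ROUTES`; the calling code stays the same.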

Production-ready on day one

Append-only audit log, scoped + expiring API keys, signed outbound webhooks with SSRF guard, workload-identity-bound cloud access, secret-store integration, hardened containers.
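For readers wondering what "signed outbound webhooks with SSRF guard" can mean in practice, here is one common pattern, sketched with the Python standard library only. It assumes an HMAC-SHA256 signature over a timestamp plus the body, and a guard that accepts only HTTPS targets resolving to public addresses. The function names and the signing layout are illustrative assumptions, not L2H's documented scheme.

```python
import hashlib
import hmac
import ipaddress
import socket
from urllib.parse import urlsplit


def sign_payload(secret: bytes, timestamp: str, body: bytes) -> str:
    """Return a hex HMAC-SHA256 over "<timestamp>.<body>" (assumed layout)."""
    message = timestamp.encode("ascii") + b"." + body
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_signature(secret: bytes, timestamp: str, body: bytes, signature: str) -> bool:
    """Receiver side: recompute the signature and compare in constant time."""
    expected = sign_payload(secret, timestamp, body)
    return hmac.compare_digest(expected, signature)


def is_safe_webhook_url(url: str) -> bool:
    """Reject non-HTTPS URLs and hosts that resolve to any non-public address."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        return False
    try:
        infos = socket.getaddrinfo(
            parts.hostname, parts.port or 443, proto=socket.IPPROTO_TCP
        )
    except socket.gaierror:
        return False
    for info in infos:
        # Drop any IPv6 zone suffix ("%eth0") before parsing the address.
        address = ipaddress.ip_address(info[4][0].split("%")[0])
        if not address.is_global:
            return False
    return True
```

A production guard would also connect to the exact address it validated, so a second DNS lookup cannot redirect the request to an internal host.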

Self-describing workflows

Every workflow exposes input and output JSON Schemas plus an example payload. Any AI agent can discover, validate, and call your workflows without prior knowledge.
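To make the idea concrete, the sketch below shows how a caller might check a payload against a workflow's published input schema before running it. The descriptor shape (`name`, `input_schema`, `example`) and the `summarize_ticket` workflow are hypothetical, and the validator covers only a small subset of JSON Schema (`type`, `required`, `properties`); a real client would more likely use a full JSON Schema library.

```python
from typing import Any

# Hypothetical descriptor, shaped as a caller might receive it from discovery.
DESCRIPTOR = {
    "name": "summarize_ticket",
    "input_schema": {
        "type": "object",
        "required": ["ticket_id", "max_words"],
        "properties": {
            "ticket_id": {"type": "string"},
            "max_words": {"type": "integer"},
        },
    },
    "example": {"ticket_id": "INC0012345", "max_words": 120},
}

_TYPES = {
    "string": str,
    "integer": int,
    "number": (int, float),
    "boolean": bool,
    "object": dict,
    "array": list,
}


def _matches(value: Any, type_name: str) -> bool:
    # bool is a subclass of int in Python, but JSON booleans are not numbers.
    if type_name in ("integer", "number") and isinstance(value, bool):
        return False
    return isinstance(value, _TYPES[type_name])


def validate(payload: Any, schema: dict) -> list[str]:
    """Return a list of human-readable errors; an empty list means valid."""
    expected = schema.get("type")
    if expected and not _matches(payload, expected):
        return [f"expected {expected}, got {type(payload).__name__}"]
    errors: list[str] = []
    if isinstance(payload, dict):
        for key in schema.get("required", []):
            if key not in payload:
                errors.append(f"missing required field: {key}")
        for key, subschema in schema.get("properties", {}).items():
            if key in payload:
                errors.extend(f"{key}: {e}" for e in validate(payload[key], subschema))
    return errors
```

With this descriptor, the published example payload validates cleanly, while a payload missing `max_words` comes back with a single "missing required field" error the caller can act on.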

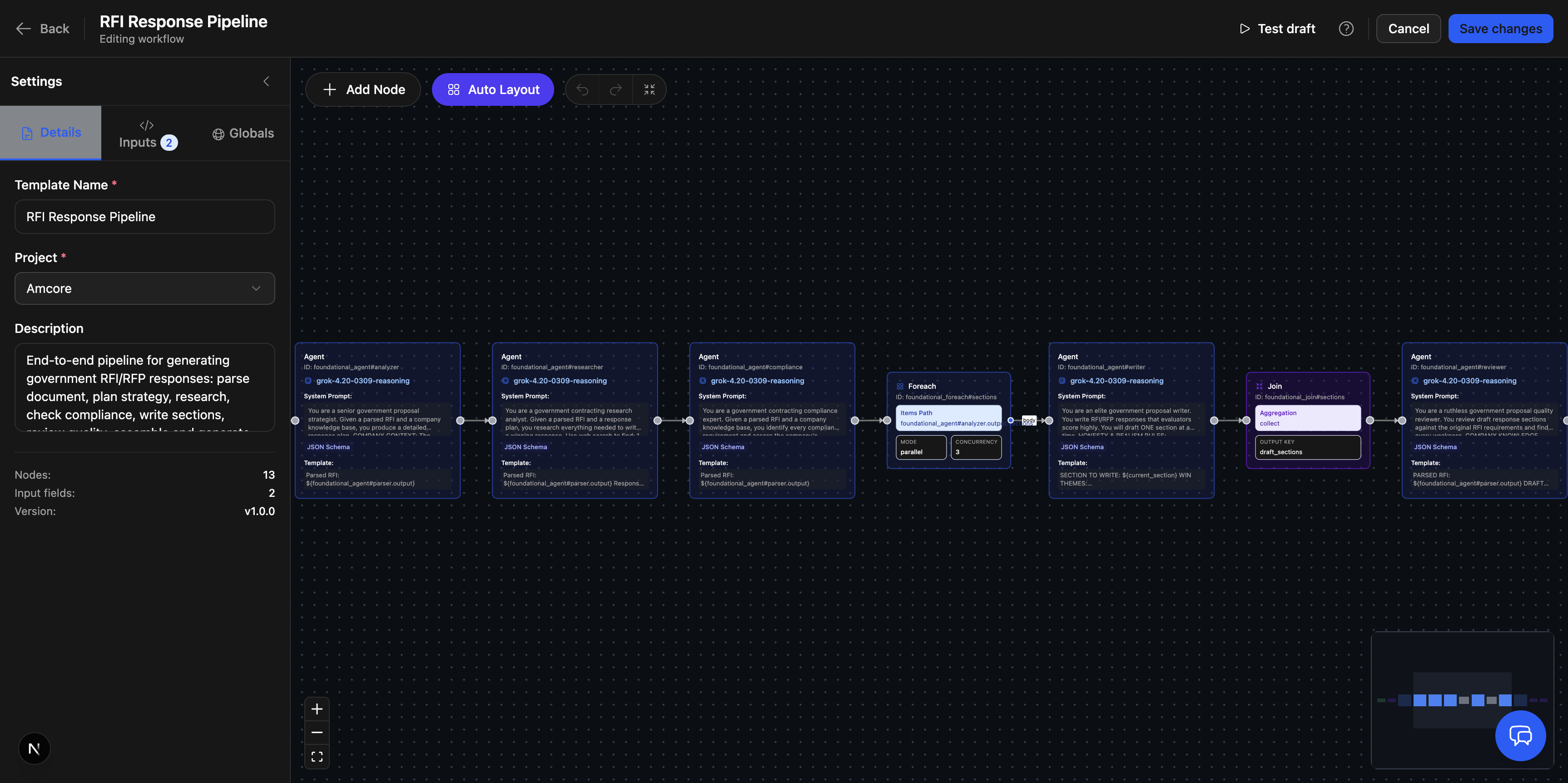

Multi-agent orchestration that shards

The orchestrator node fans work out from a planner to parallel workers and back into a finalizer, with automatic token-budget sharding, instead of running a sequential agent loop.
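The planner, worker, and finalizer pattern can be sketched in a few lines. This is a simplified illustration rather than the orchestrator's real implementation: the four-characters-per-token estimate, the greedy packing strategy, and the placeholder worker are all assumptions standing in for a real tokenizer and real model calls.

```python
from concurrent.futures import ThreadPoolExecutor


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: roughly four characters per token."""
    return max(1, len(text) // 4)


def plan_shards(records: list[str], token_budget: int) -> list[list[str]]:
    """Planner: greedily pack records into shards that fit the token budget."""
    shards: list[list[str]] = []
    current: list[str] = []
    used = 0
    for record in records:
        cost = estimate_tokens(record)
        # Close the current shard when the next record would exceed the budget.
        if current and used + cost > token_budget:
            shards.append(current)
            current, used = [], 0
        current.append(record)
        used += cost
    if current:
        shards.append(current)
    return shards


def run_worker(shard: list[str]) -> dict:
    """Worker: placeholder for one model call over a single shard."""
    return {"records": len(shard), "tokens": sum(estimate_tokens(r) for r in shard)}


def finalize(partials: list[dict]) -> dict:
    """Finalizer: merge the per-shard results into one answer."""
    return {
        "shards": len(partials),
        "records": sum(p["records"] for p in partials),
        "tokens": sum(p["tokens"] for p in partials),
    }


def orchestrate(records: list[str], token_budget: int, max_workers: int = 4) -> dict:
    shards = plan_shards(records, token_budget)
    # Workers run concurrently, one per shard, instead of in a sequential loop.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(run_worker, shards))
    return finalize(partials)
```

In this sketch a record that is larger than the budget on its own still gets a shard to itself; a fuller version would split or reject it.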

One platform. Everything else snaps on.

Pick your starting point.

Run multi-agent workflows on the Agentic Command Center. Or drop an Embedded Agent into the systems your team already lives in and see value the same day.

Platform

Agentic Command Center

The orchestration engine, governance layer, and integration backbone for every L2H deployment. Add Chat to put AI in front of users and Workflow Builder to let anyone author multi-agent workflows — all on top of any major LLM.

- Pre-built workflows with unlimited runs, plus MCP + REST extensibility

- Multi-agent orchestration with auto-sharding

- Chat + Workflow Builder add-ons on the same backbone

- Deploys to your AWS, Azure, GovCloud, or on-prem Kubernetes

Embedded Agents

AI inside the systems your team uses

Embedded Agents put the same multi-agent backbone right inside the tools your teams already live in. Shipping today for ServiceNow and VS Code, with more host platforms on the roadmap.

- 100% KB accuracy on a 59-test ServiceNow benchmark (top-performing model)

- Codebase-aware AI inside VS Code

- AI ↔ live agent handoff with full context

- Every major LLM · swap models in a config table

Choose your path

Built for both worlds. Tailored to yours.

Pick the experience that maps to your buying motion — commercial enterprise or federal & defense.

For Commercial & Enterprise

Fortune 500 · Regulated · Channel

Customer-hosted on your AWS or Azure. SOC 2 / HIPAA / PCI / ISO 27001 aligned. Every major LLM. Channel-friendly procurement with MSA + DPA standard and pilot pricing for evaluation.

- ITSM · Financial Services · Recruiting · MSP · Media

- SAML 2.0 / OIDC / SCIM / mTLS for identity

- Multi-region with per-region LLM routing

- Reseller-, GSI-, and SI-friendly delivery

For Federal & Defense

DoD · IC · Federal Civilian

In production at IL5 on GovCloud and architected for air-gapped, classified-ready deployment. The customer or sponsor holds the ATO. IL6+ ready. FedRAMP High aligned. GovCloud (DoD/DISA) configured for high-assurance environments. RFI/RFP automation and mission-ops workflows. Capability Statement and ATO support included.

- GSA / SEWP / CIO-SP4 vehicle support

- IL5 deployments on Azure Government + private routing

- Air-gapped on-prem Kubernetes with self-hosted LLMs

- RFI/RFP automation and mission-ops workflows

Proven where it counts

Trusted across enterprise and defense.

1M+

Users served

100%

KB accuracy benchmark

Unlimited

Records per analysis

Any

Frontier or open-source LLM

Built for every role in the org

One platform. Every audience.

CIO / CTO

A single, customer-hosted platform for designing, running, and chatting with AI workflows — without LLM lock-in.

Head of AI

Stop stitching agents with notebooks. Ship multi-agent workflows with versioning, structured output, and a plugin SDK.

Platform Engineering

Infrastructure-as-code on AWS, Azure, or on-prem Kubernetes. Workload-identity-bound. Audit log. Done.

Workflow Author

Drag, drop, validate, run in Workflow Builder — or just chat with any LLM or any Agentic Command Center workflow.

Developer / Integrator

Self-describing workflows + scoped API keys. Discover, validate, run from any script.

Reseller / SI Partner

One product, every customer, every cloud. Fast delivery, IP protection, margin-friendly add-on.

Latest from L2H

Follow our work on LinkedIn.

Product updates, benchmarks, deployment stories, and field notes from defense and enterprise customers.

Take command of your AI strategy

Stop renting your AI capabilities from a single vendor. Deploy a platform you own, control, and can evolve on your terms.

Deploy on AWS, Azure, GovCloud, or on-prem Kubernetes